Brace for Impact

Why does matter dominate our universe?

April 30, 2010

Contact: Margie Wylie, mwylie@lbl.gov, +1 510 486-7421

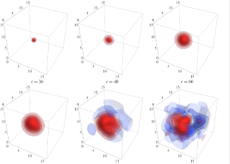

This selection of time-slices from a lattice QCD calculation shows the evolution of the proton correlation function over 7.02 septillionths of a second (7.02 × 10⁻²⁴ seconds). To enhance detail, the colors (red being more positive and blue more negative) have been normalized on each time-slice. Information from these types of calculations enhances our understanding of the nature of matter while helping scientists search for more exotic physics. (Courtesy of H. W. Lin, University of Washington)

While the fireworks at CERN's Large Hadron Collider (LHC) transfix the world, theorists are quietly doing some computational heavy lifting to help understand what these particle smash-ups might reveal about the fundamental mystery of existence: Why is there anything at all?

The Standard Model of particle physics can't explain why there is more matter than antimatter in the universe. At the LHC and other colliders, scientists sift the debris of high-energy particle collisions searching for clues to physics that lies beyond our current understanding.

However, in order for scientists to "claim they've seen something beyond the Standard Model—a very important claim—they would need to know with quite high precision what the Standard Model predicts," said William Detmold, an assistant professor of physics at the College of William and Mary and senior staff scientist at the Department of Energy's Thomas Jefferson National Accelerator Facility. "That's what we try to calculate," said Detmold, whose group computes at NERSC.

Detmold works in the field of quantum chromodynamics (QCD), the mathematical theory describing the strong force that binds quarks together into protons, neutrons and other less-familiar subatomic particles (see "Particle Roll Call"). QCD also governs how particles interact with each other. Through a computational method called lattice QCD (LQCD), Detmold's team painstakingly calculates the characteristics of subatomic particles in various combinations.

Using NERSC systems, Detmold and colleagues achieved the first-ever QCD calculations of both a three-body force between hadrons and a three-body baryon system. They reported their findings in the journal Physical Review Letters. In a subsequent paper, Detmold and Martin Savage of the University of Washington also reported another first: a QCD calculation that could help scientists better understand the quark soup that was our universe milliseconds after its birth.

A better understanding of these interactions will help physicists build more accurate models of atomic nuclei. The findings also help scientists understand what they ought to see when certain particles collide. Anything outside those values could indicate new phenomena.

Know Thyself

This chart shows the change in the contribution to the radial quark-antiquark force at two pion densities. The attractive force is slightly reduced by the presence of a pion gas, similar to what has been observed in quark-gluon plasmas.

While Detmold's research may help form a jumping-off point for the discovery of exotic physics, he emphasizes that the work is confined to the Standard Model we know today: "The overall goal of our project is to provide a QCD-based understanding of the basics of nuclear physics, how protons and neutrons interact with each other and with other particles," Detmold said.

That's more easily said than done. Calculating the properties of subatomic particles is a fiendishly difficult business that requires billions of calculations consuming millions of processor hours. Particles can't simply be torn apart and studied quark by quark, their parts summed to make a whole. Instead, quarks exist in a seething quantum soup of other quarks, antiquarks, and gluons, all of which must be taken into account (see "The ABCs of Lattice QCD"). Also, the quantum nature of quarks requires simulations to be run over and over (using random starting points) to derive average values.
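Why run the same simulation over and over? A rough illustration, assuming the runs are statistically independent (real lattice QCD samples are correlated and call for more careful error analysis): the uncertainty of an average shrinks roughly as one over the square root of the number of runs. The `fake_run` function below is a hypothetical stand-in for a simulation, not part of any lattice QCD code.

```python
import random
import statistics


def average_with_error(measurements):
    """Sample mean and its standard error (stdev / sqrt(N)), assuming independent runs."""
    n = len(measurements)
    return statistics.mean(measurements), statistics.stdev(measurements) / n ** 0.5


def fake_run(rng, true_value=1.0, noise=0.5):
    # Hypothetical stand-in for one simulation started from a random
    # configuration: the "true" answer plus Gaussian statistical noise.
    return rng.gauss(true_value, noise)


rng = random.Random(42)
for n_runs in (100, 10_000):
    data = [fake_run(rng) for _ in range(n_runs)]
    mean, err = average_with_error(data)
    print(f"{n_runs:>6} runs: {mean:.4f} +/- {err:.4f}")
```

Going from 100 to 10,000 runs cuts the error bar by about a factor of ten, which is one reason these calculations consume so many processor hours.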

"Ideally we'd simulate the whole nucleus of a carbon atom inside our computer and try to directly calculate from QCD its binding energy," said Detmold. Even with today's computing power and algorithms, that’s just not possible. "We're talking exascale-sized computations here," he said.

Instead, Detmold and colleagues substituted simpler proxies in their three-body interactions. One calculation used pions, the simplest composites formed from quarks in nature. Physicists can use these calculations to predict the properties of similar but more complex structures: atomic nuclei.

Even at their simplest, however, LQCD calculations require massively parallel supercomputers: "Machines like Franklin are very important because they have a large amount of processing power, and the fact that they are highly parallel lets us do our calculations much faster and allows us to do calculations not possible on smaller computers," Detmold said. At NERSC, Detmold's calculations ran on 4,000 cores at once. In 2009 alone, Detmold's team consumed about 30 million processor hours on systems around the world, of which NERSC provided over a third (12 million).

The Search Goes On

Scientists at the Relativistic Heavy Ion Collider (RHIC) at Brookhaven National Laboratory recently reported that they had produced a quark-gluon plasma, the same cosmic soup that existed milliseconds after the Big Bang. The plasma itself lasted a tiny fraction of a second. A major part of the evidence for this exotic state of matter was that it emitted too few J/psi particles, bound pairs of a charm quark and a charm antiquark produced in heavy-ion collisions. This is known as J/psi screening.

"We wondered if the protons and neutrons not caught up in this plasma could also cause J/psi screening," Detmold said. Calculating a similar interaction in a lattice containing 12 pions, Detmold's team found a comparable J/psi screening effect, albeit to a far lesser degree.

"At the RHIC, what's being collided are nuclei, which are all protons and neutrons. The pion system we're looking at is not the system that's there, but it's the simplest multi-hadronic system we can look at," said Detmold. "It indicates that at least some of the screening [at the RHIC] could be coming from hadrons," he concluded.

At NERSC, Detmold's group also concentrates on baryons containing bottom quarks, known as bottom baryons. One of the four major experiments at the LHC is dedicated to probing for novel physics among these particles. Detmold said: "Our studies will contribute an important ingredient in LHC searches for physics beyond the Standard Model in the bottom-baryon sector."

Particle Roll Call

Six kinds of quarks and eight types of gluons can interact to create a virtual particle zoo, but most matter is made of just two: the neutrons and protons that make up an atomic nucleus. Protons and neutrons are in turn composed of quarks (held together by the exchange of gluons).

However, physicists also use a panoply of categories and particle names that sound confusingly similar: hadrons, baryons, mesons, pions, and on and on. Some of the terms used in this article are defined below.

Quark: The smallest discrete division of matter that we know of, quarks come in six varieties (whimsically named up, down, top, bottom, strange, and charm) and three "colors," which really aren't colors at all, but rather the properties that influence how quarks group.

Neutron & proton: The subatomic particles that make up the nucleus of an atom. The neutron has no electrical charge, while the proton has a positive charge. Neutrons and protons are also baryons (because they contain three quarks) and nucleons (because they make up the nucleus of an atom).

Pion: The lightest naturally occurring hadron, the pion contains just one quark-antiquark pair.

Bottom baryon: These particles contain bottom quarks and are a major area of investigation at the LHC and other particle colliders.

The ABCs of Lattice QCD

Lattice QCD is a computational method for working around the mathematical messiness of the quantum world.

Rather than try to calculate how the quarks, antiquarks, and gluons in a roiling soup interact over all space, the method confines those elements, in a controlled way, to a finite, four-dimensional space-time grid or "lattice." Quarks are stationed at each of the crosspoints or "nodes" on the grid, and forces are calculated only along the connections between nodes. QCD interactions within this space-time lattice are calculated again and again using importance sampling until statistically valid averages emerge.
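Importance sampling on a lattice can be illustrated with a toy model far simpler than QCD. This sketch assumes a single real number on each node of a one-dimensional, periodic lattice of time nodes (a discretized harmonic oscillator, not quarks and gluons) and uses the Metropolis algorithm; production lattice QCD codes sample gluon fields with more elaborate methods such as hybrid Monte Carlo. Random updates are accepted or rejected so that likely configurations are visited more often, and averaging over many sweeps of the lattice yields the average of x². The function names are hypothetical.

```python
import math
import random


def local_action(v, left, right):
    """Terms of the toy action that involve a node with value v.

    Toy action (mass, frequency and lattice spacing set to 1, periodic in time):
        S = sum_t [ (x[t+1] - x[t])**2 / 2 + x[t]**2 / 2 ]
    """
    return 0.5 * ((v - left) ** 2 + (right - v) ** 2) + 0.5 * v ** 2


def metropolis(n_sites=32, n_sweeps=10_000, n_therm=1_000, step=1.0, seed=1):
    """Estimate <x^2> by Metropolis importance sampling of the weight exp(-S)."""
    rng = random.Random(seed)
    x = [0.0] * n_sites  # "cold" start: every node set to zero
    measurements = []
    for sweep in range(n_sweeps):
        for t in range(n_sites):
            left, right = x[(t - 1) % n_sites], x[(t + 1) % n_sites]
            proposal = x[t] + rng.uniform(-step, step)
            d_s = local_action(proposal, left, right) - local_action(x[t], left, right)
            # Accept with probability min(1, exp(-dS)), so configurations are
            # visited in proportion to their statistical weight exp(-S).
            if d_s <= 0 or rng.random() < math.exp(-d_s):
                x[t] = proposal
        if sweep >= n_therm:  # skip early sweeps while the chain equilibrates
            measurements.append(sum(v * v for v in x) / n_sites)
    return sum(measurements) / len(measurements)


estimate = metropolis()
print(f"Monte Carlo <x^2> = {estimate:.3f}; long-lattice exact value = {1 / math.sqrt(5):.3f}")
```

For this particular discretization the exact answer on a long lattice is 1/√5 ≈ 0.447, so the Monte Carlo average can be checked directly; more sweeps shrink its statistical error.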

The lattice's spatial box is minute (about two or three times the size of the proton in each direction in Detmold's calculations); yet the number of calculations required to advance a simulation by a fraction of a second can be staggering. Detmold used a lattice with 24 nodes along each spatial dimension and 64 time nodes. That's a total of more than 880,000 nodes and about 3.5 million connections (each node has four), each carrying eight gluon "colors," or roughly 28 million values in all.
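The lattice bookkeeping above can be checked with a few lines of arithmetic. This sketch assumes periodic boundaries, so each node owns exactly one connection in each of the four space-time directions, and reads the "eight colors" as eight values per connection (the eight gluon types). The helper name `lattice_counts` is hypothetical.

```python
def lattice_counts(nx: int, nt: int, colors_per_link: int = 8) -> dict:
    """Node, connection and per-connection-color totals for an nx^3 x nt lattice.

    Assumes periodic boundaries: every node owns one connection in each of
    the four space-time directions, so nothing is double counted.
    """
    nodes = nx ** 3 * nt
    links = 4 * nodes
    return {"nodes": nodes, "links": links, "link_values": links * colors_per_link}


counts = lattice_counts(nx=24, nt=64)
for name, value in counts.items():
    print(f"{name:>12}: {value:,}")
```

Under these assumptions the totals are 884,736 nodes, 3,538,944 connections and 28,311,552 connection values.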

Lattice QCD was proposed in the 1970s, but it was not a precise calculation tool until supercomputers powerful enough to do the necessary calculations came on the scene in the late 1990s. Increasing compute power, combined with recent refinements in lattice QCD algorithms and codes, has enabled physicists to predict the mass, binding energy, and other properties of particles before they're measured experimentally, and to calculate quantities already measured, confirming the underlying theory of QCD.

Lattice QCD also allows physicists to research scenarios impossible to create experimentally, such as conditions at the heart of a neutron star. "Our understanding of the evolution of stars through supernovae and then neutron stars depends on how neutrons and lambda baryons, for example, interact," said Detmold. "Experimentally, this is very hard to study, but in our models, we just change a line of code."

About NERSC and Berkeley Lab

The National Energy Research Scientific Computing Center (NERSC) is a U.S. Department of Energy Office of Science User Facility that serves as the primary high performance computing center for scientific research sponsored by the Office of Science. Located at Lawrence Berkeley National Laboratory, NERSC serves almost 10,000 scientists at national laboratories and universities researching a wide range of problems in climate, fusion energy, materials science, physics, chemistry, computational biology, and other disciplines. Berkeley Lab is a DOE national laboratory located in Berkeley, California. It conducts unclassified scientific research and is managed by the University of California for the U.S. Department of Energy.