NERSC Targets Exascale with Perlmutter and the Exascale Science Applications Program

October 18, 2021

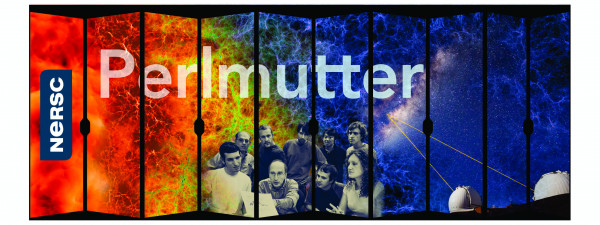

With an eye toward the first generation of exascale computing, in 2021 the National Energy Research Scientific Computing Center (NERSC) at Lawrence Berkeley National Laboratory formally unveiled its newest supercomputer, Perlmutter.

Perlmutter features a heterogeneous architecture that makes it among the fastest supercomputers in the world for scientific simulation, data analysis, and artificial intelligence applications. It will be heavily used in studies of the climate, environment, clean energy technologies, semiconductors, microelectronics, and quantum information science and is expected to help reveal new discoveries in cosmology, microbiology, genetics, material sciences, “and pretty much any other field you can think of,” said Dr. Saul Perlmutter, the Nobel Prize-winning astrophysicist for whom the system is named.

The Perlmutter system, an HPE Cray EX supercomputer, is being delivered to NERSC in two phases. Phase 1, deployed in June 2021, includes 1,536 GPU-accelerated nodes, each containing four NVIDIA NVLink-connected A100 Tensor Core GPUs and one 3rd Gen AMD EPYC™ processor. Phase 1 also has a 35 PB all-flash Lustre file system that will provide very high-bandwidth storage. Phase 2, set to arrive later in 2021, will add 3,072 CPU-only nodes, each with two 3rd Gen AMD EPYC™ processors and 512 GB of memory per node.

To ensure that its users can readily utilize this new technology, for the last two years NERSC has been working with key development teams to prepare codes for Perlmutter and the coming exascale systems through its NERSC Exascale Science Applications Program (NESAP). NESAP is a collaborative effort that connects NERSC partners with code teams, vendors, and library and tools developers to prepare for advanced architectures and new computing systems.

Since NESAP was first established in 2014, the program has collaborated closely with the U.S. Department of Energy’s Exascale Computing Project (ECP), incorporating six ECP applications into the initiative. Here are updates on five of those projects, including achievements to date and next goals.

EXAALT

The Exascale Atomistic Capability for Accuracy, Length, and Time (EXAALT) project is developing an exascale-scalable molecular dynamics simulation platform that will allow users to choose the point in accuracy, length, and time space that is most appropriate for the problem at hand, trading the cost of one against the others. The project uses classical models such as the Spectral Neighbor Analysis Potential (SNAP), a computationally intensive interatomic potential in the Large-scale Atomic/Molecular Massively Parallel Simulator (LAMMPS), a molecular dynamics code with a focus on materials modeling.

A key focus of EXAALT is to improve the performance of the SNAP potential in LAMMPS on newer-generation CPUs and GPUs. While SNAP had a GPU implementation, its performance relative to peak showed a steady decline on new CPU and GPU hardware. To address this issue, the team joined NESAP and attended the Center of Excellence hackathon in January 2019. Since then, they have made several advances:

- TestSNAP, a new stand-alone mini-app mimicking the workload of SNAP in LAMMPS, was created for rapid prototyping of various optimization strategies. This gave several collaborators an opportunity to contribute to the application without the need for any LAMMPS-specific knowledge.

- A refactor of the algorithm was performed that included kernel fission. Breaking one large GPU kernel into multiple smaller kernels allowed optimizations targeted towards specific stages of the algorithm. However, this increased the memory footprint of the algorithm since intermediate results now needed to be stored in between kernels.

- The team implemented new algorithmic improvements to reduce the memory overheads incurred due to the refactor, allowing the ECP KPP problem size of the refactored implementation to fit inside a single GPU memory.

- Scratch memory, the low-latency memory available on GPUs, was used to store intermediate results, which allowed the team to exploit symmetries in the algorithm and optimize by reducing the number of global read/write operations that arose from the new algorithm.

- All the optimizations tested in the mini-app are now part of the upstream LAMMPS/SNAP.
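The trade-off behind kernel fission can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the three stage functions are made-up arithmetic rather than SNAP's actual computation, the names `fused` and `fissioned` are hypothetical, and ordinary lists stand in for GPU global memory. It shows that splitting one fused loop into separate passes gives the same result but requires full-size intermediate buffers between passes.

```python
# Toy stages; placeholders for the real per-atom work, not SNAP's math.
def stage_a(x):
    return x * x + 1.0


def stage_b(u):
    return 0.5 * u - 2.0


def stage_c(y):
    return y * y


def fused(values):
    """One 'kernel': all stages run per element, temporaries stay local."""
    out = []
    for x in values:
        u = stage_a(x)  # exists only for this iteration
        y = stage_b(u)
        out.append(stage_c(y))
    return out, 0  # no full-size intermediate buffers were allocated


def fissioned(values):
    """Three 'kernels': each stage is its own pass and can be tuned separately."""
    u_buf = [stage_a(x) for x in values]  # pass 1 writes an intermediate buffer
    y_buf = [stage_b(u) for u in u_buf]   # pass 2 reads it and writes another
    out = [stage_c(y) for y in y_buf]     # pass 3 produces the final result
    extra_elements = len(u_buf) + len(y_buf)
    return out, extra_elements


if __name__ == "__main__":
    data = [1.0, 2.0, 3.0]
    print(fused(data))
    print(fissioned(data))
```

In this toy version the fissioned path stores two extra values per input element. That is the kind of memory overhead the later bullets describe the team reducing.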

“Over the last three years, our new algorithm and implementation achieved a 26x speedup compared to the initial implementation of LAMMPS/SNAP from January 2019 on NVIDIA-V100 GPU,” said Rahulkumar Gayatri, an application performance specialist at NERSC whose current projects include EXAALT.

ExaBiome

The increasing sizes and complexities of datasets make a strong case for exascale-capable metagenome assemblers. However, the underlying algorithmic motifs are not well suited for GPU architectures. This poses a challenge because the majority of next-generation supercomputers will rely primarily on GPUs for most of their computational abilities.

The Exascale Solutions for Microbiome Analysis project (ExaBiome) – a collaborative effort that includes researchers from Berkeley Lab’s NERSC and CRD divisions, Los Alamos National Laboratory, and the Joint Genome Institute – was established to help address the challenges. The project is using machine learning algorithms and MetaHipMer, a high-performance metagenome assembler based on the whole genome assembler Meraculous, to study microbial diversity.

Among the project’s achievements to date, MetaHipMer is now GPU-ready. This was a challenging task because, unlike a typical science simulation, the algorithms used in DNA assembly do not work well on GPU architectures. As a result of close collaboration between scientists and performance engineers to redesign and re-implement some portions of the pipeline, three out of five of MetaHipMer’s modules can now take advantage of GPUs.

In addition, with the help of the MetaHipMer pipeline on the Summit system at OLCF, the ExaBiome team has assembled a metagenomic dataset of 25 TB, the largest microbiome dataset ever assembled, and cut the total runtime in half on Summit. This JGI user data contained freshwater lake samples from a 17-year period, offering new insights into the evolution of microbial communities over time. Preparations are under way to assemble an even larger dataset that would require using an exascale machine like Frontier to its limits.

“GPU optimizations in MetaHipMer will benefit computations on Perlmutter as well as Frontier and Aurora,” said Muaaz Awan, an application performance specialist at NERSC and a member of the ExaBiome team. “We have already started the porting efforts and hope to complete them before Frontier becomes available for production runs.”

ExaFEL

The primary objective of the Data Analytics at the Exascale for Free Electron Lasers (ExaFEL) project – part of both ECP and NESAP for Data – is to leverage exascale computing to reduce, from weeks to minutes, the time needed to analyze x-ray diffraction data generated by experimental facilities such as the Linac Coherent Light Source. The objective of this data analysis is to understand the (yet unknown) molecular structure and function of samples, such as COVID-19 viral proteins, and the molecules involved in photosynthesis.

While ExaFEL covers a range of data projects, at present the focus is on porting two code bases to GPUs: multi-tiered iterative phasing (MTIP) for fluctuation x-ray scattering, and the computational crystallography toolbox (CCTBX), an open source code designed to advance automation of macromolecular structure determination. The project team is preparing both codes to make efficient use of next-generation supercomputers – initially Perlmutter, Frontier, and Aurora – and their GPUs. Because Perlmutter is using NVIDIA GPUs, Frontier is using AMD GPUs, and Aurora is using Intel GPUs, the team is working to ensure that the code they are developing is portable. For both CCTBX and MTIP, this involves using software packages such as Kokkos that make code portability easier for developers.
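The general idea behind a portability layer can be sketched as follows: the loop body is written once, and a separate backend object decides how it is executed. This is a minimal Python illustration only. It is not Kokkos (a C++ library) or its API, and the class names `SerialBackend` and `ThreadBackend` and the `axpy` helper are hypothetical names chosen for this example.

```python
from concurrent.futures import ThreadPoolExecutor


class SerialBackend:
    """Runs the loop body one index at a time on the calling thread."""

    def parallel_for(self, n, body):
        for i in range(n):
            body(i)


class ThreadBackend:
    """Runs the same loop body across a pool of worker threads."""

    def __init__(self, workers=4):
        self.workers = workers

    def parallel_for(self, n, body):
        with ThreadPoolExecutor(max_workers=self.workers) as pool:
            # list() waits for completion and re-raises any worker exception.
            list(pool.map(body, range(n)))


def axpy(backend, a, x, y):
    """y[i] += a * x[i], written once and run on whichever backend is given."""

    def body(i):
        y[i] += a * x[i]  # each index is touched by exactly one call

    backend.parallel_for(len(x), body)
    return y


if __name__ == "__main__":
    print(axpy(SerialBackend(), 2.0, [1.0, 2.0, 3.0], [10.0, 10.0, 10.0]))
    print(axpy(ThreadBackend(), 2.0, [1.0, 2.0, 3.0], [10.0, 10.0, 10.0]))
```

Swapping the backend changes where the work runs without touching `axpy` itself. That separation is what lets a single source target hardware from different vendors.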

In addition, the collaborators are looking for ways to off-load as many computational tasks to the GPUs as possible. The aim is to make continuous, efficient use of the hardware by distributing the data so the GPUs always have something to do, explained Johannes Blaschke, an applications performance specialist at NERSC and the NESAP liaison for the ExaFEL project. “We are trying to get a really weak signal out of a mountain of data, so we need to find ways for all of this complex data analysis to work at scale on a supercomputer,” he said.
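Why the way data is distributed affects whether devices sit idle can be shown with a small scheduling simulation. This is a sketch under assumptions, not ExaFEL code: the chunk costs are invented numbers, "workers" stand in for GPUs, and the function names are hypothetical. It compares a fixed round-robin assignment with a shared queue in which each chunk goes to whichever worker frees up first.

```python
import heapq


def static_makespan(costs, n_workers):
    """Round-robin assignment fixed up front; returns when the slowest worker ends."""
    loads = [0.0] * n_workers
    for i, cost in enumerate(costs):
        loads[i % n_workers] += cost
    return max(loads)


def dynamic_makespan(costs, n_workers):
    """Shared work queue: each chunk goes to the worker that becomes free first."""
    free_at = [0.0] * n_workers  # a list of equal values is already a valid heap
    for cost in costs:
        earliest = heapq.heappop(free_at)
        heapq.heappush(free_at, earliest + cost)
    return max(free_at)


if __name__ == "__main__":
    # Invented, deliberately uneven chunk costs (arbitrary time units).
    chunk_costs = [8, 1, 8, 1, 8, 1, 1, 1]
    print("static :", static_makespan(chunk_costs, 2))
    print("dynamic:", dynamic_makespan(chunk_costs, 2))
```

With these made-up costs, the fixed assignment leaves one worker idle for most of the run, while the queue-based assignment finishes sooner because neither worker waits while chunks remain.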

Subsurface Applications

“An Exascale Subsurface Simulator of Coupled Flow, Transport, Reactions and Mechanics” is an ECP Subsurface project focused on developing a new multiphysics application code to model and solve flow, transport, reactions, and mechanics in wellbore cement to assess the risk of fracture evolution on wellbore failure.

In addition to developing this multiphysics capability, the project is developing a performance portability strategy for the application code Chombo-Crunch, a high-resolution pore scale subsurface simulator based on the Chombo software libraries supporting structured grid, adaptive, finite volume methods for numerical PDEs. Chombo-Crunch is one of the codes targeted by the NESAP program and incorporates adaptive mesh refinement (AMR). For this effort the project is developing the Proto C++ portability layer. A Proto-enabled backend, Chombo4, is being developed for adoption of Proto in Chombo-Crunch.

Multiphysics coupling for these types of problems requires high resolution on large aspect ratio domains, which demands more computational resources than are available on current supercomputers. Also, new supercomputing architectures like Perlmutter and Frontier contain hardware accelerators that require software engineering of legacy application codes for efficient execution and optimized performance. Success of the ECP Subsurface application code development project will depend on performance portability development in Proto and Chombo4 that is supported by the NESAP program.

The project’s key achievement to date is the development of the Proto middleware approach for point-wise and stencil-based operations on regular and irregular grids in Chombo4 applications. The approach has been ported to NVIDIA and AMD GPUs; so far, it has been demonstrated that Chombo4 applications with the Proto backend can execute on 4,096 NVIDIA V100 GPUs on Summit.

WDMApp

The goals of the ECP Whole Device Model Application (WDMApp) project are to predict performance of the edge plasma in ITER – the world's largest fusion experiment – using a core-edge coupled kinetic-code framework and to achieve 50+ enhancements in the scientific problem size on exascale computers compared to what was achieved on Titan. For the core plasma that releases exhaust fusion heat to the edge plasma, the team is using the core-optimized code GENE or GEM. For the edge plasma that accepts the exhaust heat from the core plasma and builds up a pressure pedestal on which the core plasma sits, the team is using the edge-optimized code XGC.

Due to the non-equilibrium multiscale nature of the edge plasma in complex geometry, XGC’s particle time-advance and Fokker-Planck collision kernels dominate the performance of the core-edge coupled WDMApp code. These two kernels must be ported and well-optimized on new architectures and programming models. Exhaust heat from core to edge is mostly carried by plasma turbulence. Kinetic coupling of the plasma turbulence across the core-edge coupling interface has been achieved to date in collisionless plasma, which evaluates the core-to-edge heat flow seamlessly. The coupled codes fully utilize the V100 GPUs on Summit.

The project’s next goal is to include Fokker-Planck collisions in the coupled simulation so that the collisional modification of the turbulence and heat flux can be captured. The goal in the NESAP program is to port and optimize the Fokker-Planck kernel on A100 GPUs and optimize the coupled WDMApp simulation on Perlmutter with more realistic collision effects. The performance-dominant XGC code has been converted from Fortran to C++ and made to utilize Kokkos for portable GPU off-loading. The knowledge gained on the Perlmutter architecture will be utilized in achieving WDMApp’s exascale goal.

About NERSC and Berkeley Lab

The National Energy Research Scientific Computing Center (NERSC) is a U.S. Department of Energy Office of Science User Facility that serves as the primary high performance computing center for scientific research sponsored by the Office of Science. Located at Lawrence Berkeley National Laboratory, NERSC serves almost 10,000 scientists at national laboratories and universities researching a wide range of problems in climate, fusion energy, materials science, physics, chemistry, computational biology, and other disciplines. Berkeley Lab is a DOE national laboratory located in Berkeley, California. It conducts unclassified scientific research and is managed by the University of California for the U.S. Department of Energy.