New Climate Models Reveal Geographical Link Between Wildfires and Extreme Weather

December 13, 2022

By Joseph Gertler

Contact: cscomms@lbl.gov

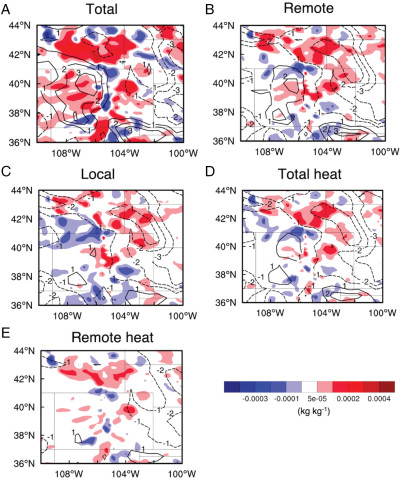

Figure 1. Differences in moisture (shaded color) and wind speed (contour lines) in the Central U.S. due to (A) total wildfire effect, (B) remote wildfire effect, (C) local wildfire effect, (D) total heat effect, and (E) remote heat effect during the latter two storm periods. Solid contour lines denote increased wind speeds; dashed lines denote decreased wind speeds. Credit: Jiwen Fan, PNNL

For longtime residents of the Western U.S., wildfires are nothing new, although they have begun to occur all too often. The same can be said of extreme weather events in other parts of the U.S., which are also becoming more common. But sometimes, events that appear to be isolated can offer surprising insights for scientists studying the increasing impact of climate change.

One example of this can be seen in a new study that used supercomputing resources at the National Energy Research Scientific Computing Center (NERSC) to investigate a growing connection between wildfires in the Western U.S. and extreme weather in the Central U.S. A team of researchers led by scientists from Pacific Northwest National Laboratory (PNNL) ran a series of models on the Cori supercomputer at Lawrence Berkeley National Laboratory (Berkeley Lab) to examine the relationship between wildfires and frequencies of heavy rainfall and large hail.

They looked specifically at the influence of Western U.S. wildfires on extreme precipitation and hailstones in the Central U.S. and found that heat and tiny airborne particles produced by these wildfires intensify severe storms far away, in some cases bringing baseball-sized hail, heavier rain, and flash flooding to “downwind” states like Colorado, Wyoming, Nebraska, Kansas, Oklahoma, and the Dakotas. The effect shows up as differences in wind speed and moisture, as illustrated in Figure 1. The panels in the figure show how each factor of interest contributes to those changes.

Computationally Demanding Research

Typically, western wildfires and storms in the Central U.S. are separated by seasons. As Western U.S. blazes begin earlier each year, however, the two events now occur closer together.

“I think the most alarming part of the research we’ve conducted here relates to how wildfires have the potential to enhance the severity of the storms,” stressed Jiwen Fan of PNNL, lead researcher on the study, which was published Oct. 17 in the journal Proceedings of the National Academy of Sciences. “We’ve deduced that without the wildfires, the hailstorms would still be happening, but they would not be as intense.”

Fan and her team used data describing the storms’ hailstones and rain levels, as well as the fires and smoke plumes, to explore a possible mechanism behind the connection. The group also applied weather models that track heat and smoke particles to explore how the fires could remotely influence weather. They relied on a combination of the Fortran-based Weather Research and Forecasting model and Python for data processing.
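As a rough illustration of the kind of comparison behind a difference map like Figure 1, the sketch below subtracts a baseline field from a field that includes wildfire effects. It assumes the comparison is between one model run with wildfire effects and one without; the article does not spell out the team's exact procedure. The function names, grid size, and moisture values are all hypothetical and are not taken from the study or from the Weather Research and Forecasting model.

```python
# Hypothetical illustration only: names and numbers are invented for this
# sketch and do not come from the study or from any WRF output.

def difference_field(with_fire, without_fire):
    """Return the cell-by-cell difference between two equally shaped 2D grids."""
    if len(with_fire) != len(without_fire):
        raise ValueError("grids must have the same number of rows")
    diff = []
    for row_fire, row_base in zip(with_fire, without_fire):
        if len(row_fire) != len(row_base):
            raise ValueError("grid rows must have the same length")
        diff.append([a - b for a, b in zip(row_fire, row_base)])
    return diff


def mean_of_grid(grid):
    """Average all cells of a 2D grid into a single number."""
    values = [v for row in grid for v in row]
    return sum(values) / len(values)


# Made-up moisture values (g/kg) on a tiny 2x3 grid.
moisture_with_fire = [[12.0, 13.5, 14.0], [11.0, 12.5, 13.0]]
moisture_without_fire = [[11.0, 13.0, 13.0], [11.0, 12.0, 12.0]]

# Positive cells mean more moisture in the run that includes wildfire effects.
moisture_change = difference_field(moisture_with_fire, moisture_without_fire)
mean_change = mean_of_grid(moisture_change)

print(moisture_change)
print(mean_change)
```

In practice the fields would be large gridded model outputs rather than hand-typed lists, and an array library would likely replace the explicit loops; plain lists are used here only to keep the sketch self-contained.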

“This is very computationally demanding work, which means we need lots of computer hours for our model simulations and processing. As such, the study happened through NERSC, and without it we could not have possibly done such work,” Fan elaborated. “For future climate studies, we need to pay attention to concurrency of extreme events because two concurrent events could interact and make one another more severe. This is something we need to study more because it would be happening more often under climate change.”

Fan also stressed the importance of computing power and the need to keep pushing toward more powerful systems. “Accurately simulating extreme weather and the impact of climate change needs high-resolution at convection-permitting scales with a complete set of physical processes considered, but such simulations at the global scale are really computationally challenging,” she said. “Our group currently only does such high-resolution work over a limited area. It’s still too costly computationally to do such simulations in the ensemble manner with the computer resources that we currently have.”

The emerging era of exascale supercomputing should help. “Currently, we are limited by the architecture of the generation of computing we currently reside in,” Fan said. “The next-generation architectures will help our research greatly by letting us run model simulations at a larger quantity and a faster rate. These two factors essentially are what bottlenecks the rate at which we can process data using our modeling systems.”

The work done at PNNL contributes new insights and model simulations to climate research, and it offers a preview of what supercomputing and data science in the field will look like in the near future and beyond. “We will use the advanced code we developed to continue ratifying the impact of climate change at a macroscopic scale,” Fan concluded.

NERSC is a U.S. Department of Energy Office of Science user facility.

This article includes information from a PNNL news release.

About NERSC and Berkeley Lab

The National Energy Research Scientific Computing Center (NERSC) is a U.S. Department of Energy Office of Science User Facility that serves as the primary high performance computing center for scientific research sponsored by the Office of Science. Located at Lawrence Berkeley National Laboratory, NERSC serves almost 10,000 scientists at national laboratories and universities researching a wide range of problems in climate, fusion energy, materials science, physics, chemistry, computational biology, and other disciplines. Berkeley Lab is a DOE national laboratory located in Berkeley, California. It conducts unclassified scientific research and is managed by the University of California for the U.S. Department of Energy.