Chombo-Crunch Sinks Its Teeth into Fluid Dynamics

Decade of Development Yields Novel Code for Energy, Oil & Gas, Aerospace

May 11, 2015

Contact: Kathy Kincade, +1 510 495 2124, kkincade@lbl.gov

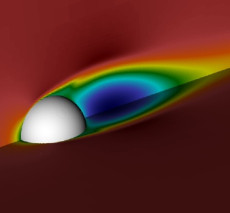

Using Chombo-Crunch to study turbulent flow past a sphere could help aerospace engineers optimize takeoff and landing patterns through more accurate prediction of aircraft wakes. Simulation: David Trebotich; VisIt

For more than a decade, mathematicians and computational scientists have been collaborating with earth scientists at Lawrence Berkeley National Laboratory (Berkeley Lab) to break new ground in the modeling of complex flows in energy and oil and gas applications.

Their work has yielded a high-performance computational fluid dynamics (CFD) and reactive transport code dubbed “Chombo-Crunch” that could enhance efforts to develop carbon sequestration as a way to address the Earth’s growing carbon dioxide challenges. It could also lead to new safety measures in the oil and gas industry and aerospace engineering.

Chombo-Crunch is the brainchild of David Trebotich, a computational scientist in the Computational Research Division (CRD), and Carl Steefel, a computational geoscientist in the Earth Sciences Division (ESD). Its algorithmic roots lie in microfluidics research, funded by Laboratory Directed Research and Development (LDRD), that Trebotich was involved in back in 2002, when he and Steefel were at Lawrence Livermore National Laboratory. At the time, there was national interest in developing miniaturized microfluidic devices to detect chemical attacks via air samples, so Trebotich began modeling flow in a packed bed design: a small cylinder filled with spheres (essentially an engineered porous medium) that could function as a flow-through device. The challenge was to overcome the limitations of traditional finite difference methods (FDMs) in simulating complex geometries.

“I was interested in modeling flow and transport in microfluidic devices,” Trebotich said. “Up to that time we had been using high-resolution FDMs to solve partial differential equations. But they are limited when it comes to modeling flow in and around geometry, especially in the complex pore space that you might experience in an engineered microdevice or the subsurface.”

Using CFD tools based on embedded boundary technology, Trebotich found that he could determine the optimal flow conditions for capturing a polymer molecule by fluid mechanical forces alone. This work, coupled with Steefel’s vision for using the finite volume approach to perform direct numerical simulation of reactive transport at the pore scale, laid the foundation for Chombo-Crunch.

Chombo-Crunch addresses the limitations of FDMs in simulating complex geometries by combining two codes in an embedded boundary approach: Chombo, a suite of multiscale, multiphysics simulation tools for solving applied partial differential equations (PDEs), such as the equations that describe fluid dynamics and species transport in complex geometries; and CrunchFlow, a code that provides mixed equilibrium-kinetic treatment of geochemical reactions, developed by Steefel and interfaced to Chombo by Trebotich and Sergi Molins of Berkeley Lab’s Earth Sciences Division. Together they provide more detailed analysis and modeling of complex flows and subsurface reactive transport than was previously possible with lab experiments or larger-scale models.

“The embedded boundary, or cut cell, approach allows for explicit representation of a material boundary and, in particular, reactive surface area of a natural mineral,” Trebotich explained.
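A minimal sketch of the cut-cell idea follows. It assumes a 2D unit square and a single circular solid grain, and the function name and sampling scheme are our own hypothetical choices. Each grid cell stores the fraction of its area that is fluid; cells with a fraction strictly between 0 and 1 are the cut cells that carry the boundary. Chombo computes this geometric information, including the boundary (reactive surface) area, with its own more accurate algorithms. The brute-force sub-sampling here is only for clarity.

```python
import numpy as np


def cut_cell_volume_fractions(nx, ny, center, radius, samples=8):
    """Estimate the fluid volume fraction of each cell on an nx-by-ny grid
    covering the unit square, with a solid disk of the given center and radius.

    1.0 = cell entirely fluid, 0.0 = cell entirely inside the solid,
    anything in between = a cut cell crossed by the embedded boundary.
    (Hypothetical illustration, not Chombo's geometry generator.)
    """
    dx, dy = 1.0 / nx, 1.0 / ny
    # Sample points at the centers of a samples-by-samples sub-grid in each cell.
    offsets = (np.arange(samples) + 0.5) / samples
    frac = np.empty((nx, ny))
    for i in range(nx):
        for j in range(ny):
            X, Y = np.meshgrid((i + offsets) * dx, (j + offsets) * dy, indexing="ij")
            # Implicit (level-set) description of the boundary:
            # phi > 0 outside the disk (fluid), phi < 0 inside it (solid).
            phi = np.hypot(X - center[0], Y - center[1]) - radius
            frac[i, j] = np.mean(phi > 0.0)
    return frac


frac = cut_cell_volume_fractions(16, 16, center=(0.5, 0.5), radius=0.25)
cut = (frac > 0.0) & (frac < 1.0)  # the ring of cut cells around the grain
print(f"{cut.sum()} cut cells; fluid fraction of domain = {frac.mean():.4f}")
```

An embedded boundary finite volume scheme uses fractions like these, together with the corresponding face-area fractions, to scale cell volumes and fluxes. This lets the solid surface be represented explicitly on an otherwise regular Cartesian grid.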

Broad Range of Applications

For the last 12 years Trebotich has been testing and tweaking the Chombo-based flow and species transport solver that serves as the simulation engine under the hood of Chombo-Crunch. That work included running some impressive carbon sequestration simulations last year at Berkeley Lab’s National Energy Research Scientific Computing Center (NERSC) and the Oak Ridge Leadership Computing Facility. More recently he’s been looking at new geometry-based applications that can benefit from this CFD simulation prowess, primarily in energy and oil and gas.

“We’ve done a lot of performance optimization on the code over the last decade,” Trebotich said, referring to a team of colleagues in CRD’s Applied Numerical Algorithms Group, including Dan Graves, Brian Van Straalen and Terry Ligocki. “With these optimizations, coupled with the discretization method, we are able to perform complex flow simulations at unprecedented scale and resolution.”

In a paper published May 15, 2015 in Communications in Applied Mathematics and Computational Science (CAMCoS), Trebotich described how the robustness of the flow solver in Chombo can enhance CFD modeling for a broad range of flow geometries, from creeping flow in realistic pore space, such as the previous carbon sequestration work, to transitional turbulent flows past bluff bodies and turbulent pipe flow.

For example, the Berkeley Lab team used the CFD algorithm to study turbulent flow past a sphere, a model problem that Trebotich believes could help aerospace engineers optimize takeoff and landing patterns through more accurate prediction of aircraft wakes. This particular simulation was run at Argonne Leadership Computing Facility as part of an INCITE allocation awarded to Trebotich.

The high-resolution CFD simulator also helped shed new light on flow in the well following the Deepwater Horizon Macondo oil well explosion and fire in April 2010, which resulted in the deaths of 11 workers and caused a massive oil spill into the Gulf of Mexico. After the failure of the blowout preventer allowed oil and natural gas to gush into the Gulf at the sea floor, the Department of Energy established the Flow Rate Technical Group, comprising scientists from multiple national labs, to estimate the oil flow rate based on the physical properties and behavior of the oil and gas in the reservoir, the wellbore and seafloor attachments.

During May and June 2010, Berkeley Lab members of the Flow Rate Technical Group (Curt Oldenburg, Barry Freifeld, Karsten Pruess, Lehua Pan, Stefan Finsterle and George Moridis) extended the Berkeley Lab simulator TOUGH2 to model Darcy flow in the reservoir coupled to one-dimensional wellbore flow to estimate the oil and gas blowout flow rate. More recently, Trebotich investigated the two-dimensional oil flow in the well, accounting for the variable diameters of successive casing strings in the well. Trebotich’s simulations used high-resolution adaptive mesh refinement to reveal fine-scale recirculations in high-Reynolds number flow through casing expansions in the well below the blowout preventer.
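For reference, two standard relations sit behind this paragraph. Darcy’s law governs single-phase flow through a porous reservoir, and the Reynolds number measures how strongly inertia dominates viscosity in the wellbore. The notation is ours. TOUGH2 itself solves multiphase, multicomponent generalizations of Darcy’s law, and the article does not specify the exact equations used in either model.

```latex
\mathbf{q} = -\frac{k}{\mu}\left(\nabla p - \rho\,\mathbf{g}\right),
\qquad
\mathrm{Re} = \frac{\rho\,U D}{\mu}
```

Here q is the Darcy flux (volumetric flow rate per unit area), k the permeability, μ the dynamic viscosity, p the pressure, ρ the fluid density, g the gravitational acceleration, U the mean flow speed and D the casing diameter. At high Reynolds number, flow entering a sudden widening of the casing typically separates from the wall, producing recirculation zones like those described above.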

“With the simulation we showed that not only could you get the right answer for bulk flow properties such as flow rate and pressure drop in a very long pipe, you could also get microscopic resolution near the blowout preventer that could possibly be used to help identify more exactly where the failure point was,” Trebotich said.

Simulation of resolved steady-state flow in fractured Marcellus shale based upon FIB-SEM imagery obtained by Lisa Chan at Tescan USA, processed by Terry Ligocki (Berkeley Lab), courtesy Tim Kneafsey (Berkeley Lab).

Fractured Shale Research

Another series of simulations showed how their modeling approach could help geoscientists address some of the unknowns of hydrofracturing, or “fracking.” Using the algorithm to solve the incompressible Navier-Stokes equations in complex geometries, Trebotich worked with Berkeley Lab geoscientist Tim Kneafsey to achieve the first-ever computer simulation of fully resolved flow in fractured shale from image data of rock samples. The ability to simulate flow in shales at high resolution could help geoscientists better understand the effects of fracking and develop safe and reliable methods for storing carbon dioxide underground.
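In their standard constant-density form, the incompressible Navier-Stokes equations read as follows. This is textbook notation and not necessarily the exact formulation used in Chombo:

```latex
\frac{\partial \mathbf{u}}{\partial t} + (\mathbf{u}\cdot\nabla)\,\mathbf{u}
  = -\frac{1}{\rho}\nabla p + \nu\,\nabla^{2}\mathbf{u},
\qquad
\nabla\cdot\mathbf{u} = 0
```

Here u is the fluid velocity, p the pressure, ρ the constant density and ν the kinematic viscosity, with the no-slip condition u = 0 on the rock surfaces. In the creeping-flow regime typical of pore spaces, the inertial term (u·∇)u becomes negligible and the momentum equation approaches the Stokes equations.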

“We just have a proof of concept here, but we can actually simulate flow in fractured shale and resolve flow features in microfractures,” Trebotich said. “These types of materials, which are very difficult to model as it is because of their low porosity, can also display nanoporosity requiring an adaptive modeling approach, which our framework supports.”

The Chombo flow solver can handle a range of flows, from turbulent flow in simple geometries to creeping flow in more complex geometries that derive from image data, like real subsurface rock, he added.

“The key is having a robust approach to representing the boundary that results in a more tractable way of gridding these complex domains and is consistent with discretization methods like the finite volume method we described in the CAMCoS paper,” Trebotich said. “Coupled with adaptive mesh refinement, our embedded boundary approach is a powerful tool for doing high-performance, multiscale, multiphysics simulations.”
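Adaptive mesh refinement concentrates resolution only where it is needed. The following hypothetical sketch is a heavily simplified illustration rather than Chombo’s implementation. It shows the basic cycle on a 2D array: tag coarse cells where the solution changes sharply, enclose the tagged cells in a patch, and fill a finer grid on that patch from the coarse data. Chombo’s block-structured AMR generates many patches with clustering algorithms and uses more sophisticated interpolation. The single bounding box, the gradient threshold and the piecewise-constant prolongation here are illustrative assumptions.

```python
import numpy as np


def tag_cells(field, threshold):
    """Flag cells where the undivided difference (change across one cell) exceeds threshold."""
    d0, d1 = np.gradient(field)  # default unit spacing gives per-cell differences
    return np.hypot(d0, d1) > threshold


def bounding_patch(tags):
    """Smallest index box (lo, hi) enclosing every tagged cell; hi is exclusive."""
    idx = np.argwhere(tags)
    if idx.size == 0:
        return None
    return idx.min(axis=0), idx.max(axis=0) + 1


def prolong(coarse, ratio=2):
    """Piecewise-constant interpolation: copy each coarse value into a ratio-by-ratio block."""
    return np.kron(coarse, np.ones((ratio, ratio)))


# Coarse 32x32 grid with a sharp front near x = 0.5 (a stand-in for a thin fracture or wall layer).
n = 32
x = (np.arange(n) + 0.5) / n
field = np.tanh((x[:, None] - 0.5) / 0.02) * np.ones((1, n))

tags = tag_cells(field, threshold=0.1)
lo, hi = bounding_patch(tags)
fine = prolong(field[lo[0]:hi[0], lo[1]:hi[1]])
print("refine rows", lo[0], "to", hi[0] - 1, "-> fine patch shape", fine.shape)
```

Piecewise-constant prolongation preserves the patch average exactly. A finite volume method relies on this property to keep quantities such as mass conserved across refinement levels.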

About NERSC and Berkeley Lab

The National Energy Research Scientific Computing Center (NERSC) is a U.S. Department of Energy Office of Science User Facility that serves as the primary high performance computing center for scientific research sponsored by the Office of Science. Located at Lawrence Berkeley National Laboratory, NERSC serves almost 10,000 scientists at national laboratories and universities researching a wide range of problems in climate, fusion energy, materials science, physics, chemistry, computational biology, and other disciplines. Berkeley Lab is a DOE national laboratory located in Berkeley, California. It conducts unclassified scientific research and is managed by the University of California for the U.S. Department of Energy.