A Computational Science Approach for Analyzing Culture

February 18, 2010

Contact: Linda Vu, lvu@lbl.gov, +1 510 486 2402

Watch Jeremy Douglass talk about Cultural Analytics: http://youtu.be/-YlT1qFhJhk

Just as photography revolutionized the study of art by allowing millions of people all over the world to scrutinize sculptures and paintings outside of museums, researchers from the Software Studies Initiative at the University of California at San Diego (UCSD) believe that a new paradigm called cultural analytics will drastically change the study of culture by allowing people to quantify evolving trends across time and countries.

Inspired by scientists who have long used computers to transform simulations and experimental data into multi-dimensional models that can then be dissected and analyzed, cultural analytics applies similar techniques to cultural data. With an allocation on the Department of Energy's National Energy Research Scientific Computing Center's (NERSC) supercomputers and help from the facility's analytics team, UCSD researchers recently illustrated changing trends in media and design across the 20th and 21st centuries via Time magazine covers and Google logos.

"The explosive growth of cultural content on the web, including social media together with digitization efforts by museums, libraries and companies, make possible a fundamentally new paradigm for the study of both contemporary and historical cultures," says Lev Manovich, Director of the Software Studies Initiative at the University of California, San Diego.

"The cultural analytics paradigm provides powerful tools for researchers to map subjective impressions of art into numerical descriptors like intensities of color, texture and shapes, as well as the organization of images using classification techniques," says Daniela Ushizima of the NERSC Analytics team, who contributed pattern recognition codes to the cultural analytics image-processing pipeline.

Manovich's research, called "Visualizing Patterns in Databases of Cultural Images and Video," is one of three projects currently participating in the Humanities High Performance Computing Program, an initiative that gives humanities researchers access to some of the world’s most powerful supercomputers, typically reserved for cutting-edge scientific research. The program was established in 2008 as a unique collaboration between DOE and the National Endowment for the Humanities.

"For decades NERSC has provided high-end computing resources and expertise to thousands of science users annually. These resources have contributed to a number of science breakthroughs that have improved our understanding of nature. By opening up these computing resources to humanities, we will also gain a better understanding of human culture and history," says NERSC Division Director Kathy Yelick.

Visualizing Cultural Changes in Time Covers and Google Logos

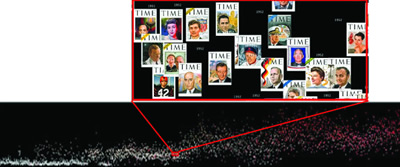

Figure 1. TIME OVER TIME: (Bottom Image) This is a graph of all the Time magazine covers of the 20th century. (Top Image) This is a high resolution cutout from the image below.

As relatively inexpensive hardware and software empowers libraries, museums and universities to digitize historical collections of art, music and literature, and as masses of people continue to create and publish their own movies, music and artwork on the Internet, Manovich predicts that the biggest challenge facing cultural analytics will be securing enough computing resources to process, manage and visualize this data at a high-enough resolution for analysis.

Over the past few months, Manovich and his collaborators leveraged the expertise of NERSC's analytics team to help them to develop a pipeline for processing cultural images and to scale up their existing codes to run on NERSC's high-end computing systems. To test their pipeline, the team mapped out all 4,553 covers of Time magazine from 1923 to 2008 and all the Google logos that have been published on the search engine's homepage around the world from 1998 to 2009.

In the Time magazine visualization (Fig. 1), the X-axis represents time in years, from the beginning of the 20th century shown on the left to the early 21st century on the right. The Y-axis measures the brightness and saturation of each cover, with the most colorful covers appearing toward the top.
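As a rough illustration of how a cover could be reduced to the kind of numbers plotted in Fig. 1, the sketch below averages saturation and brightness over a cover's pixels using Python's standard `colorsys` module. It is not the team's pipeline: the function names `cover_features` and `plot_position` are hypothetical, and combining the two measures into a single Y value by simple averaging is an assumption made only for this example.

```python
import colorsys


def cover_features(pixels):
    """Return (mean_saturation, mean_brightness) for a cover.

    `pixels` is a non-empty sequence of (r, g, b) tuples with channel
    values in the range 0-255. Both results lie between 0.0 and 1.0.
    """
    if not pixels:
        raise ValueError("pixels must be non-empty")
    sat_total = 0.0
    val_total = 0.0
    for r, g, b in pixels:
        # colorsys expects channels scaled to 0.0-1.0 and returns (h, s, v).
        _, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
        sat_total += s
        val_total += v
    n = len(pixels)
    return sat_total / n, val_total / n


def plot_position(year, pixels):
    """Map a cover to an (x, y) position: x is the year, y a color score.

    Assumption for illustration only: y is the plain average of mean
    saturation and mean brightness, so more colorful covers score higher.
    """
    sat, val = cover_features(pixels)
    return year, (sat + val) / 2.0
```

Under these assumptions, a black-and-white cover scores zero saturation and lands low on the Y-axis, while a cover dominated by bright, pure colors lands near the top.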

"Visualizing the Time covers in this format reveals gradual historical changes in the design and content of the magazine," says Manovich. "For example, we see how color comes in over time, with black and white and color covers co-existing for a long period. Saturation and contrast of covers gradually increases throughout the 20th century—but surprisingly, this trend appears to stop toward the very end of the century, with designers using less color in the last decade."

He notes that there are also various changes in magazine content revealed by the visualization. "We see when women and people of color start to be featured, how the subjects diversify to include sports, culture, and topics besides politics, and so on. Since our high-resolution visualizations show the actual covers rather than using points or other graphical primitives typical of standard quantitative graphics, a single visualization reveals many trends at once; it is also accessible to a wider audience than statistical graphs," adds Manovich.

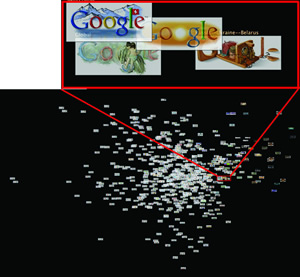

Figure 2. ANALYZING GOOGLE: (Bottom) This graphic shows hundreds of Google logos that have appeared on the search engine's homepage all over the world from 1998 to the present. (Top) This is a high-resolution cutout from the image below.

Using NERSC computers, Jeremy Douglass, a post-doctoral researcher with Software Studies, also applied cultural analytics techniques to hundreds of Google logos that have appeared on the search engine's homepage all over the world from 1998 to the present (Fig. 2). Artists periodically reinterpret logos on the Google homepage to mark a cultural milestone, a holiday or a special occasion. In the visualization, the X-axis measures how much the various logos deviate from the original design. Images toward the left show very little modification and those toward the right have been significantly modified. Meanwhile, the images toward the bottom of the Y-axis illustrate artistic changes that affect the bottom of the word "Google," and those toward the top show changes toward the upper portion of the word.

"Google logos are relatively small, and there have so far only been less than 600 of them, so the act of rendering full-resolution maps is quite doable with a desktop workstation. However, we were interested in using data exploration to tackle ideas about visual composition and statistical concepts like centroid, skewness, kurtosis, and so forth," says Douglass.

To explore how these and other concepts might contribute to mapping the "space of aesthetic variation," Douglass notes that the team needed to experiment. "That's where the ability to iterate with NERSC's analytics team becomes so important. When you want to repeatedly re-render 4553 high-resolution images and be able to see how they evolve over time according to various features, 'How long will this take?' becomes a very big deal, and there's no such thing as too much power," he adds.
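The statistical concepts Douglass mentions (centroid, skewness and kurtosis) can be computed directly from pixel data. The sketch below is one possible reading, not the team's code: it assumes each logo has already been reduced to a grid of non-negative weights, for example the per-pixel difference from the original logo, and the function name `weighted_moments` is hypothetical. The skewness and kurtosis here describe how that weight is distributed from top to bottom.

```python
def weighted_moments(grid):
    """Summarize where the weight in a 2D grid is concentrated.

    `grid` is a list of rows of non-negative numbers. Row 0 is the top
    of the image, so a larger `centroid_y` means weight nearer the bottom.
    Returns a dict with the centroid and the skewness and (non-excess)
    kurtosis of the vertical distribution. The last two are None when
    all of the weight sits in a single row and they are undefined.
    """
    total = sum(sum(row) for row in grid)
    if total <= 0:
        raise ValueError("grid must contain some positive weight")

    # Weighted mean column index and row index.
    cx = sum(x * w for row in grid for x, w in enumerate(row)) / total
    cy = sum(y * sum(row) for y, row in enumerate(grid)) / total

    def central(k):
        # k-th central moment of the row index, weighted by row totals.
        return sum(((y - cy) ** k) * sum(row) for y, row in enumerate(grid)) / total

    variance = central(2)
    if variance == 0:
        skewness = None
        kurtosis = None
    else:
        skewness = central(3) / variance ** 1.5
        kurtosis = central(4) / variance ** 2

    return {
        "centroid_x": cx,
        "centroid_y": cy,
        "skewness": skewness,
        "kurtosis": kurtosis,
    }
```

With a grid like this, a logo altered mostly along its lower edge would show a vertical centroid closer to the last row, which is in the spirit of the Y-axis ordering described for Fig. 2.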

The cultural analytics team ultimately hopes to create tools that will allow digital media schools and universities to compare hundreds of thousands of videos and images in real time to facilitate live discussions with students. They also hope to create visualizations that will measure in many millions of pixels and show patterns across tens of millions of images. Although they are currently only beginning to develop software for cultural analytics, Manovich says the NERSC runs showed him that supercomputers are a "game changer" and will be vital to achieving this goal.

"Datasets that would take us months to process on our local desktop machines can be completed in only a few hours on the NERSC systems, and this significantly speeds up our workflow," says Manovich. "The NERSC analytics team has been incredibly helpful to our work. In addition to helping us develop the technical tools to process our data, they also share in our excitement, often sending us information that might be useful to the project."

Currently Manovich and his collaborators are using NERSC computers to analyze and visualize patterns across 10 million comic book images from around the globe.

"Contemporary culture is constantly evolving with the advancement of technology. Billions of pictures, video and audio files are uploaded to the Internet every day by ordinary people all over the world. Cultural analytics is an emerging paradigm to make visible patterns contained in this ocean of media," says Manovich.

"Working with Lev Manovich gave me a lot to think about in terms of how high performance computing is underutilized in the humanities and how it could potentially accelerate knowledge discovery," says Janet Jacobsen of the NERSC analytics group. "All in all, this has been a really fun project to be involved with. These researchers are doing something that no one else in their field is doing."

About NERSC and Berkeley Lab

The National Energy Research Scientific Computing Center (NERSC) is a U.S. Department of Energy Office of Science User Facility that serves as the primary high performance computing center for scientific research sponsored by the Office of Science. Located at Lawrence Berkeley National Laboratory, NERSC serves almost 10,000 scientists at national laboratories and universities researching a wide range of problems in climate, fusion energy, materials science, physics, chemistry, computational biology, and other disciplines. Berkeley Lab is a DOE national laboratory located in Berkeley, California. It conducts unclassified scientific research and is managed by the University of California for the U.S. Department of Energy.