Magellan Explores Cloud Computing for DOE's Scientific Mission

March 30, 2010

Contact: Linda Vu, lvu@lbl.gov, 510-495-2402

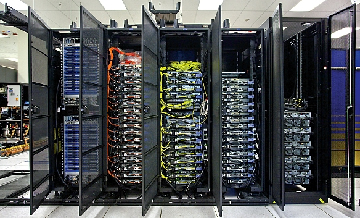

Cloud Control – The Magellan management and network control racks at NERSC. To test cloud computing for scientific capability, NERSC and the Argonne Leadership Computing Facility (ALCF) installed purpose-built test beds for running scientific applications on the IBM iDataPlex cluster. (Photo Credit: Roy Kaltschmidt)

Cloud computing is gaining traction in the commercial world, with companies like Amazon, Google, and Yahoo offering pay-as-you-go computing cycles to help organizations meet cyclical demands for extra computing power. But can such an approach also meet the computing and data storage demands of the nation's scientific community?

A new $32 million program funded by the American Recovery and Reinvestment Act through the U.S. Department of Energy (DOE) will examine cloud computing as a cost-effective and energy-efficient computing paradigm for mid-range science users to accelerate discoveries in a variety of disciplines, including analysis of scientific datasets in biology, climate change, and physics.

DOE is a world leader in providing high-performance computing resources for science. The National Energy Research Scientific Computing Center (NERSC) at Lawrence Berkeley National Laboratory supports the high-end computing needs of over 3,500 DOE Office of Science researchers, and the Leadership Computing Facilities at Argonne and Oak Ridge National Laboratories serve the largest-scale computing projects across the broader science community through the Innovative and Novel Computational Impact on Theory and Experiment (INCITE) program. These facilities focus on providing access to some of the world's most powerful supercomputers, systems specifically designed for high-end scientific computing.

However, not all of the science demands for DOE computing resources require the scale of these well-balanced petascale machines. A great deal of computational science today is conducted on personal laptops or desktop computers, or on small private computing clusters set up by individual researchers or small collaborations at their home institutions. Local clusters have also been ideal for researchers who co-design complex problem-solving software infrastructures for their platforms in addition to running their simulations. Users whose computational needs fall between desktop and petascale systems are often referred to as "mid-range" users and may benefit greatly from the Magellan cloud test beds.

In the past, mid-range users were enticed to set up their own purpose-built clusters for developing codes, running custom software, or solving computationally inexpensive problems because hardware was relatively cheap. However, the costs of ownership, including ever-rising energy bills, space constraints for hardware, ongoing software maintenance, security, operations, and a variety of other expenses, are forcing mid-range researchers and their funders to look for more cost-efficient alternatives. Some experts suspect that cloud computing may be a viable solution.

Cloud computing refers to a flexible model for on-demand access to a shared pool of configurable computing resources (such as networks, servers, storage, applications, services, and software) that can be easily provisioned as needed. Cloud computing centralizes resources to gain economies of scale and lets scientists scale up to solve larger science problems, while still allowing the system software to be configured for individual application requirements. To test cloud computing for scientific capability, NERSC and the Argonne Leadership Computing Facility (ALCF) will install similar mid-range computing hardware but will offer different computing environments. Together, the systems will form a cloud test bed that scientists can use for their computations while also testing the effectiveness of cloud computing for their particular research problems.
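To make the on-demand model concrete, here is a minimal Python sketch of a shared node pool from which users lease nodes, each booted with a software image of their choosing. The class and method names (`ResourcePool`, `Lease`, `provision`, `release`) and the image labels are hypothetical illustrations, not part of Magellan or of any real cloud toolkit; actual cloud software also handles scheduling, virtualization, and accounting, all of which are omitted here.

```python
from dataclasses import dataclass, field


@dataclass(eq=False)  # compare leases by identity so release() removes exactly this one
class Lease:
    """A hypothetical grant of nodes booted with a user-chosen software image."""
    user: str
    nodes: int
    image: str


@dataclass
class ResourcePool:
    """A toy shared pool of identical nodes that users draw from on demand."""
    total_nodes: int
    leases: list[Lease] = field(default_factory=list)

    def free_nodes(self) -> int:
        # Idle capacity is whatever is not currently leased out.
        return self.total_nodes - sum(lease.nodes for lease in self.leases)

    def provision(self, user: str, nodes: int, image: str) -> Lease:
        # Grant the request only if enough idle nodes remain in the shared pool.
        if nodes > self.free_nodes():
            raise RuntimeError(f"{nodes} nodes requested, only {self.free_nodes()} free")
        lease = Lease(user, nodes, image)
        self.leases.append(lease)
        return lease

    def release(self, lease: Lease) -> None:
        # Returned nodes become available to the next request immediately.
        self.leases.remove(lease)


pool = ResourcePool(total_nodes=100)
genomics = pool.provision("genomics-team", 60, "blast-image")
climate = pool.provision("climate-team", 30, "climate-model-image")
print(pool.free_nodes())  # 10
pool.release(genomics)
print(pool.free_nodes())  # 70
```

Because idle capacity returns to the pool as soon as a lease ends, one group's finished work frees nodes for the next group, which is the efficiency argument for centralizing resources rather than giving every group its own cluster.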

Since the project is exploratory, it has been named Magellan in honor of the Portuguese explorer who led the first expedition to sail around the globe. It is also named for the Magellanic Clouds, two of the Milky Way's nearest neighboring galaxies, which are visible from the Southern Hemisphere.

What Is Cloud Computing?

Networking – When completed, the Magellan system at NERSC will be interconnected using QDR InfiniBand, 10 Gbps Ethernet, multiple 1 Gbps Ethernet links, and an 8 Gbps Fibre Channel storage area network (SAN). (Photo Credit: Roy Kaltschmidt)

In the report "Above the Clouds: A Berkeley View of Cloud Computing," a team of luminaries from the Electrical Engineering and Computer Sciences Department at the University of California, Berkeley noted that cloud computing refers to both the applications delivered as services over the Internet and the hardware and systems software in the datacenters that provide those services. The services themselves have long been referred to as software as a service (SaaS). The datacenter hardware and software is referred to as a cloud.

The advantages of SaaS to both end users and service providers are well understood. Service providers enjoy greatly simplified software installation and maintenance and centralized control over versioning; end users can access the service “anytime, anywhere,” share data and collaborate more easily, and keep their data stored safely in the infrastructure. Cloud computing does not change these arguments, but it does give more application providers the choice of deploying their product as SaaS without provisioning a datacenter. Just as the emergence of semiconductor foundries gave chip companies the opportunity to design and sell chips without owning a fabrication plant, cloud computing allows providers to deploy SaaS, and to scale on demand, without building or provisioning a datacenter.

Mid-Range Users on a Cloud

Realizing that not all research applications require petascale computing power, the Magellan project will explore several areas:

- Understanding which science applications and user communities are best suited for cloud computing (see the related article “Metagenomics on a Cloud?” for more)

- Understanding the deployment and support issues required to build large science clouds. Is it cost effective and practical to operate science clouds? How could commercial clouds be leveraged?

- How well does existing cloud software meet the needs of science, and could extending or enhancing it improve its utility?

- How well does cloud computing support data-intensive scientific applications?

- What are the challenges to addressing security in a virtualized cloud environment?

By installing the Magellan systems at two of DOE’s leading computing centers, the project will leverage staff experience and expertise as users put the cloud systems through their paces. The Magellan test bed will consist of cluster hardware built on IBM’s iDataPlex chassis, using Intel’s Nehalem CPUs and a QDR InfiniBand interconnect. Total computing performance across both sites will be on the order of 100 teraflop/s.

Currently, one of the challenges in building a private cloud is the lack of software standards. As part of the Magellan project, researchers at ALCF and NERSC will evaluate Adaptive's Cloud Computing Suite, which lets them maintain performance by managing clouds with non-virtualized nodes, deploying private clusters for specific groups, and provisioning software images at runtime so that each project can have an optimized software environment. Both centers will also explore other packages, such as Nimbus and the open-source Eucalyptus toolkit, as well as Apache’s Hadoop and Google’s MapReduce, two related software frameworks for processing large distributed datasets. Although these frameworks are not widely supported at traditional supercomputing facilities, large distributed datasets are a common feature of many scientific codes, which makes such workloads natural fits for cloud computing.
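For readers unfamiliar with the MapReduce model that Hadoop implements, the following pure-Python sketch walks through its three phases (map, shuffle, reduce) by counting short DNA substrings (k-mers) across a handful of sequencing reads. It uses no Hadoop API; the function names and the toy reads are made up for illustration, and everything runs in a single process, whereas a real framework would distribute the map and reduce calls across many nodes.

```python
from collections import defaultdict
from itertools import chain


def map_kmers(read, k=3):
    """Map step: emit (k-mer, 1) for every length-k substring of one read."""
    for i in range(len(read) - k + 1):
        yield read[i:i + k], 1


def shuffle(pairs):
    """Shuffle step: group all emitted values by key, as a framework does between phases."""
    groups = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_counts(key, values):
    """Reduce step: combine all values for one key into a single total."""
    return key, sum(values)


def map_reduce(reads, k=3):
    # A framework would typically run these map calls in parallel, scheduling them
    # near the nodes that hold each block of input; here they simply run in sequence.
    mapped = chain.from_iterable(map_kmers(read, k) for read in reads)
    return dict(reduce_counts(key, values) for key, values in shuffle(mapped).items())


reads = ["ACGTAC", "GTACGT"]  # toy reads, made up for illustration
print(map_reduce(reads))  # {'ACG': 2, 'CGT': 2, 'GTA': 2, 'TAC': 2}
```

Because each map call looks at only one read and each reduce call at only one key, both phases can be spread across nodes without coordination, which is why the model suits large distributed datasets.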

By making Magellan available to a wide range of DOE science users, the researchers will be able to analyze the suitability of a cloud model across the broad spectrum of the DOE science workload. They will also use performance-monitoring software to analyze what kinds of science applications are being run on the system and how well they perform on a cloud. The science users will play a key role in this evaluation, as they bring a very broad scientific workload into the equation and will help the researchers learn which features are important to the scientific community.

Data Storage and Networking

To address the challenge of analyzing the massive amounts of data being produced by scientific instruments ranging from powerful telescopes photographing the Universe to gene sequencers unraveling the genetic code of life, the Magellan test bed will also provide a storage cloud with a little over a petabyte of capacity.

The NERSC Global Filesystem (NGF) will provide most storage needs for projects running on the NERSC portion of the Magellan system. Approximately 1 PB of storage and 25 gigabits per second (Gbps) of bandwidth have been added to support the test bed. Archival storage needs will be met by NERSC's High Performance Storage System (HPSS) archive, whose capacity is being increased by 15 PB. Meanwhile, the Magellan system at ALCF will have 250 TB of local disk storage on the compute nodes and an additional 25 TB of global disk storage on its GPFS file system.

NERSC will make the Magellan storage available to science communities using a set of servers and software called Science Gateways, and will also experiment with flash memory technology to provide fast random-access storage for some of the more data-intensive problems. Approximately 10 TB of flash will be deployed in NGF as a high-bandwidth, low-latency storage class and for metadata acceleration. Around 16 TB will be deployed as local SSD in one scalable unit (SU) for data analytics, local read-only data, and local temporary storage. Approximately 2 TB will be deployed in HPSS.

The ALCF will provide active storage, using Hadoop over PVFS, on approximately 100 compute/storage nodes. This active storage will add approximately 30 teraflop/s of compute power to the ALCF Magellan system, along with approximately 500 TB of local disk storage and 10 TB of local SSD.

The NERSC and ALCF facilities will be linked by a groundbreaking 100 Gbps network, developed by DOE’s Energy Sciences Network (ESnet) with funding from the American Recovery and Reinvestment Act. Such high bandwidth will facilitate rapid transfer of data between geographically dispersed clouds and enable scientists to use available computing resources regardless of location.
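A rough calculation shows why link bandwidth matters at this scale. The sketch below estimates how long it would take to move one petabyte at the 10 Gbps, 25 Gbps, and 100 Gbps rates mentioned in this article, assuming decimal units (1 PB = 10^15 bytes) and a perfectly sustained line rate. Real transfers lose throughput to protocol overhead and storage bottlenecks, which the hypothetical `efficiency` parameter can be used to approximate.

```python
def transfer_hours(size_bytes, link_gbps, efficiency=1.0):
    """Idealized hours to move size_bytes over a link rated at link_gbps gigabits per second.

    efficiency is a hypothetical fraction (0, 1] of the line rate actually sustained.
    """
    bits = size_bytes * 8                    # 8 bits per byte
    bits_per_second = link_gbps * 1e9 * efficiency
    return bits / bits_per_second / 3600     # seconds -> hours


PETABYTE = 1e15  # decimal petabyte, in bytes

for gbps in (10, 25, 100):
    print(f"{gbps:>3} Gbps: {transfer_hours(PETABYTE, gbps):.1f} hours")
# prints:
#  10 Gbps: 222.2 hours
#  25 Gbps: 88.9 hours
# 100 Gbps: 22.2 hours
```

Under these idealized assumptions, a petabyte that would take about nine days to move at 10 Gbps moves in under a day at 100 Gbps.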

About NERSC and Berkeley Lab

The National Energy Research Scientific Computing Center (NERSC) is a U.S. Department of Energy Office of Science User Facility that serves as the primary high performance computing center for scientific research sponsored by the Office of Science. Located at Lawrence Berkeley National Laboratory, NERSC serves almost 10,000 scientists at national laboratories and universities researching a wide range of problems in climate, fusion energy, materials science, physics, chemistry, computational biology, and other disciplines. Berkeley Lab is a DOE national laboratory located in Berkeley, California. It conducts unclassified scientific research and is managed by the University of California for the U.S. Department of Energy.