All NERSC Production Systems Now on the Grid

February 1, 2004

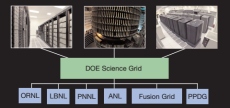

With the new year came good news for NERSC users – all of NERSC’s production computing and storage resources are now Grid-enabled and can be accessed by users whose Grid applications use the Globus Toolkit.

As a result, Grid users now have access to NERSC’s IBM supercomputer (“Seaborg”), HPSS, the PDSF cluster, the visualization server “Escher,” and the math server “Newton.” Users can also get support for Grid-related issues from NERSC’s User Services Group.

“Now that the core functionalities have been addressed, our next push is to make the Grid easier to use and manage, for both end users and the administrators who handle applications that span multiple sites,” said Steve Chan, who coordinated the Grid project at NERSC.

One of the major challenges faced by NERSC’s Grid team was installing the necessary software on Seaborg, which operates using a complicated software stack. Additionally, the system needed to be configured and tested without interfering with its heavy scientific computing workload. By working with IBM and drawing upon public domain resources, the team made Seaborg accessible via the Grid in January.

“With Steve Chan’s leadership and the technical expertise of the NERSC staff, we crafted a Grid infrastructure that scaled to all of our systems,” said Bill Kramer, general manager of the NERSC Center. “As the driving force, he figured out what needed to be done, then pulled together the resources to get it done.”

To help prepare users, center staff presented tutorials at both Globus World and the Global Grid Forum, and provided training specifically for NERSC users. When authenticated users log into NIM (NERSC Information Management), they are now able to enter their certificate information into the account database and have this information propagated out to all the Grid-enabled systems they normally access, eliminating the need to have separate passwords for each system. The next step is for NIM to help non-credentialed users obtain a Grid credential.

Because the Grid opens up a wider range of access paths to NERSC systems, the security system was enhanced. Bro, the LBNL-developed intrusion detection system that provides one level of security for NERSC, was adapted to monitor Grid traffic at NERSC for an additional layer of security. The long-term benefits, according to Kramer, will be NERSC systems that are easier to use and even more secure.

“Of course, this is not the end of the project,” Kramer said. “NERSC will continue to enhance these Grid features in the future as we strive to continue providing our users with the most advanced computational systems and services.”

This accomplishment, which also required the building of new access servers, reflects a two-year effort by staff members. Here is a list of participants and their contributions:

Nick Bathaser built the core production Grid servers and has been working on hardening the security of the myproxy server.

Scott Campbell developed the Bro modules that are able to analyze the GridFTP and Gatekeeper protocols, as well as examine the certificates being used for authentication.

Shane Canon built a Linux kernel module that can associate Grid certificates with processes, and helped deploy Grid services for the STAR and ATLAS experiments.

Steve Chan led and coordinated the overall project, and developed and ported much of the software that created these abilities.

Shreyas Cholia built the modifications to the HPSS FTP daemon so that it supports GSI, making it compatible with GridFTP. He has also been working on the Globus File Yanker, a Web portal interface for copying files between GridFTP servers.

Steve Lau works on the policy and security aspects of Grid computing at NERSC.

Ken Okikawa has been building and supporting Globus on Escher to support the VisPortal application.

R.K. Owen, Clayton Bagwell and Mikhail Avrekh of the NIM team have worked on the NIM interface so that certificate information gets sent out to all the cluster hosts.

David Paul is the Seaborg Grid lead, and has been building and testing Globus under AIX.

Iwona Sakrejda is the lead for Grid support of PDSF. She developed training for all NERSC users and supports all the Grid projects within the STAR and ATLAS experiments.

Jay Srinivasan wrote patches to the Globus code to support password lockout and expiration control.

Dave Turner is the lead for Grid support for Seaborg users. He recently helped a user, Frank X. Lee, move a few terabytes of files from the Pittsburgh Supercomputing Center to NERSC’s HPSS using GridFTP.

About NERSC and Berkeley Lab

The National Energy Research Scientific Computing Center (NERSC) is a U.S. Department of Energy Office of Science User Facility that serves as the primary high performance computing center for scientific research sponsored by the Office of Science. Located at Lawrence Berkeley National Laboratory, NERSC serves almost 10,000 scientists at national laboratories and universities researching a wide range of problems in climate, fusion energy, materials science, physics, chemistry, computational biology, and other disciplines. Berkeley Lab is a DOE national laboratory located in Berkeley, California. It conducts unclassified scientific research and is managed by the University of California for the U.S. Department of Energy.