Pinpointing the Magnetic Moments of Nuclear Matter

Lattice QCD Calculations Reveal Inner Workings of Lightest Nuclei

January 20, 2015

By Kathy Kincade

Contact: cscomms@lbl.gov

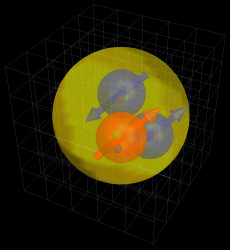

Artist's impression of a triton, the atomic nucleus of a tritium atom. The image shows blue neutrons and a red proton with quarks inside; the arrows indicate the alignments of the spins. Image: William Detmold, MIT

A team of nuclear physicists has made a key discovery in its quest to shed light on the structure and behavior of subatomic particles.

Using supercomputing resources at the U.S. Department of Energy’s (DOE) National Energy Research Scientific Computing Center (NERSC), located at Berkeley Lab, the Nuclear Physics with Lattice QCD (NPLQCD) collaboration demonstrated for the first time the ability of quantum chromodynamics (QCD)—a fundamental theory in particle physics—to calculate the magnetic structure of some of the lightest nuclei.

Their findings, published December 16, 2014 in Physical Review Letters, are part of an ongoing effort to address fundamental questions in nuclear physics and high-energy physics and further our understanding of the universe, according to the NPLQCD. The collaboration was established in 2004 to study the properties, structures and interactions of fundamental particles, including protons, neutrons, tritons, quarks and gluons—the subatomic particles that make up all matter.

“We are interested in how neutrons and protons interact with each other, how neutrons and strange particles interact with each other and more generally what the nuclear forces are,” explained Martin Savage, senior fellow in the Institute for Nuclear Theory and professor at the University of Washington, a founding member of the NPLQCD and a 10-year NERSC user. “If you want to make predictions for complex nuclear systems, you have to know what the nuclear forces are.”

While some of these interactions are well measured experimentally—such as the strength with which a neutron and a proton interact with each other at low energies—others are not. Savage and his colleagues believe a mathematical technique known as lattice QCD is the key to unlocking the remaining mysteries of nuclear forces.

The Theory of Almost Everything

QCD emerged in the 1970s as a theoretical component of the Standard Model of particle physics—our current mathematical description of reality. The Standard Model, sometimes described as “the theory of almost everything,” is used to explain electromagnetic, weak and strong nuclear interactions and to classify all known subatomic particles. The only force not described is gravity.

Lattice QCD is an approach to solving the QCD theory of quarks and gluons. Researchers use it to break up space and time into a grid (lattice) of points and then develop and solve equations connecting the degrees of freedom of those points, explained William Detmold, assistant professor of Physics at the Massachusetts Institute of Technology and a co-author on the Physical Review Letters paper.

“Lattice QCD is the implementation of QCD on the computer. It is the tool we use to solve QCD,” Detmold said. “And by solving QCD I mean calculating some observable quantity that we can compare with experiments.”
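To make the idea of a space-time grid concrete, here is a minimal sketch of a lattice calculation in Python. It is not QCD and it is not the collaboration's code: it assumes a free scalar field on a small two-dimensional periodic grid in place of quarks and gluons, and samples field configurations with the Metropolis algorithm. All function names and parameter values are hypothetical and chosen only for illustration.

```python
import math
import random


def make_lattice(size):
    """Cold start: a size x size grid with the field set to zero everywhere."""
    return [[0.0] * size for _ in range(size)]


def action(phi, mass):
    """Discretized action of a free scalar field with periodic boundaries."""
    size = len(phi)
    total = 0.0
    for x in range(size):
        for t in range(size):
            here = phi[x][t]
            # Forward differences couple each point to its neighbors.
            dx = phi[(x + 1) % size][t] - here
            dt = phi[x][(t + 1) % size] - here
            total += 0.5 * (dx * dx + dt * dt) + 0.5 * mass * mass * here * here
    return total


def local_action_change(phi, x, t, new_value, mass):
    """Change in the action when only the field at (x, t) is replaced."""
    size = len(phi)
    neighbors = (
        phi[(x + 1) % size][t], phi[(x - 1) % size][t],
        phi[x][(t + 1) % size], phi[x][(t - 1) % size],
    )

    def local(value):
        kinetic = sum(0.5 * (value - n) ** 2 for n in neighbors)
        return kinetic + 0.5 * mass * mass * value * value

    return local(new_value) - local(phi[x][t])


def metropolis_sweep(phi, mass, step, rng):
    """Propose one update per lattice point; return the acceptance rate."""
    size = len(phi)
    accepted = 0
    for x in range(size):
        for t in range(size):
            proposal = phi[x][t] + rng.uniform(-step, step)
            delta = local_action_change(phi, x, t, proposal, mass)
            # Accept with probability min(1, exp(-delta)).
            if delta <= 0.0 or rng.random() < math.exp(-delta):
                phi[x][t] = proposal
                accepted += 1
    return accepted / (size * size)


def estimate_phi_squared(size=8, mass=1.0, sweeps=400, thermalization=100,
                         step=1.0, seed=1):
    """Monte Carlo estimate of the lattice average of phi squared."""
    rng = random.Random(seed)
    phi = make_lattice(size)
    samples = []
    for sweep in range(sweeps):
        metropolis_sweep(phi, mass, step, rng)
        if sweep >= thermalization:  # discard early, unequilibrated sweeps
            samples.append(sum(v * v for row in phi for v in row) / (size * size))
    return sum(samples) / len(samples)


if __name__ == "__main__":
    print(estimate_phi_squared())
```

The averaged quantity plays the role of the "observable" Detmold describes, in a deliberately simplified setting. Production lattice QCD calculations work in four dimensions with far more elaborate fields and algorithms.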

Lattice QCD was first proposed in the 1970s, but it did not become a precise calculation tool until the 1990s, when computing systems powerful enough to do the necessary calculations came on the scene. Over the last two decades, increasing compute power and refinements in lattice QCD algorithms and codes have enabled physicists both to predict the mass, binding energy and other properties of particles before they are measured experimentally and to calculate quantities that have already been measured.

“There are a number of really critical areas in nuclear physics and particle physics that require lattice QCD to succeed,” Savage said. “If you want to make predictions, for instance, of what happens on the inside of a collapsing star, what dictates whether a supernova collapses through to a black hole or ends up as a neutron star is determined by how soft or how hard nuclear matter is. We don’t have enough measurements that we can do on Earth, and the analytic theories currently aren’t good enough to determine what happens in those situations. Lattice QCD is a technique that will greatly improve our understanding of the nuclear interactions relevant to these matter densities.”

Magnetic Moment a ‘First Step’

In recent years the NPLQCD and other QCD research groups have made steady progress in their efforts to tease apart the behavior of subatomic particles. For the Physical Review Letters study, the NPLQCD team investigated the magnetic moments of neutrons, protons and the lightest nuclei: deuterons, tritons and helium-3 (³He). They used the Chroma lattice QCD code to run a series of Monte Carlo calculations on NERSC’s newest supercomputer, Edison, and demonstrated for the first time QCD’s ability to calculate the magnetic structure of nuclei.

“This is the first study where we ask the question about what the structure of the nucleus is, and the first study probing what nuclei are at the level of quarks,” Detmold explained. “If you can understand the magnetic moments and the more complicated magnetic structure of nuclei, you can come up with a topographical map of what, for example, the current distribution inside a nucleus actually is. The magnetic moment is a first step toward that.”

The NPLQCD team also discovered something quite unexpected, according to Savage: that the theoretical magnetic moments of these nuclei at unphysically large quark masses are remarkably close to those found in physical experiments.

“Ideally you want to be performing these calculations at the physical values of the quark masses so you can compare directly with an experiment and make predictions that would directly impact the experiment,” he said. “These are the very first calculations of nuclear magnetic moments and a first glimpse of nuclear structure to emerge from lattice QCD calculations.”

It is much more computationally difficult to mathematically model a nucleus like ³He than a single proton, Detmold added, and it was only possible to achieve these findings with the advent of the latest supercomputers. For this study the researchers used about 30 million CPU hours on 16,000 cores on Edison, a Cray XC30 that went into full production in early 2014. They also made use of some gauge configurations pre-computed in previous years, according to Detmold.
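For a rough sense of scale, the figures above can be converted into elapsed time. The sketch below assumes, purely for illustration, that all 16,000 cores ran concurrently and continuously. The article does not say how the jobs were actually scheduled, so this is a back-of-the-envelope estimate rather than a reported number.

```python
# Figures quoted in the article.
cpu_hours = 30_000_000
cores = 16_000

# Assumption: every core is busy for the whole run, with no queue time.
wall_clock_hours = cpu_hours / cores
wall_clock_days = wall_clock_hours / 24

print(f"{wall_clock_hours:.0f} hours, about {wall_clock_days:.0f} days")
```

Under that assumption the calculation corresponds to roughly 1,875 hours, or about 78 days, of continuous running on 16,000 cores.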

“NERSC resources are critical to our calculations,” Savage said. “The NERSC machines are able to run the largest jobs we presently need to undertake and are very effective computational platforms.”

Looking ahead, the researchers are eager to see what the continued evolution of supercomputing systems can help them achieve in their ongoing lattice QCD explorations.

“At some level this calculation [in the Physical Review Letters study] is a stepping stone to the calculation where the numbers we get should agree exactly with experimental examination of the magnetic moments,” Detmold said. “There is another step we would like to do if we have enough computing power to do it, and in a few years’ time there will be: to redo this calculation with the actual physical values of the quark masses.” Once this is accomplished, the same techniques will be used to make predictions for nuclear physics quantities that are important but hard to measure experimentally, he added.

About NERSC and Berkeley Lab

The National Energy Research Scientific Computing Center (NERSC) is a U.S. Department of Energy Office of Science User Facility that serves as the primary high performance computing center for scientific research sponsored by the Office of Science. Located at Lawrence Berkeley National Laboratory, NERSC serves almost 10,000 scientists at national laboratories and universities researching a wide range of problems in climate, fusion energy, materials science, physics, chemistry, computational biology, and other disciplines. Berkeley Lab is a DOE national laboratory located in Berkeley, California. It conducts unclassified scientific research and is managed by the University of California for the U.S. Department of Energy.