People

NERSC staff work in teams (groups) designed to serve users’ varied needs and to maintain the highest-quality supercomputing infrastructure possible.

For an overview, please consult our organizational chart.

Division director

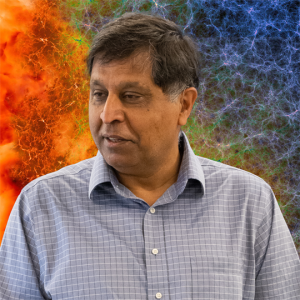

Sudip Dosanjh

Scientific Division Director

National Energy Research Scientific Computing Center (NERSC)

Division leadership team

Deborah Bard

NERSC Science Engagement and Workflows Department Head

National Energy Research Scientific Computing Center (NERSC)

Wahid Bhimji

Division Deputy for AI and Science and Group Leader

National Energy Research Scientific Computing Center (NERSC)

Data & AI Services Group

Hai Ah Nam

HPC Technology Department Head and NERSC-10 Project Director

National Energy Research Scientific Computing Center (NERSC)

Charles Schwartz

Computer Systems Manager 2

National Energy Research Scientific Computing Center (NERSC)

Storage Systems Group

Nicholas Wright

NERSC Chief Architect and Advanced Technologies Group Lead

National Energy Research Scientific Computing Center (NERSC)

Advanced Technologies Group

Group leaders

Wahid Bhimji

Division Deputy for AI and Science and Group Leader

National Energy Research Scientific Computing Center (NERSC)

Data & AI Services Group

Norm Bourassa

NERSC Energy & Water Efficiency Engineer

National Energy Research Scientific Computing Center (NERSC)

Building Infrastructure Group

Richard Canon

Group Leader

National Energy Research Scientific Computing Center (NERSC)

Data Science Engagement Group

Ravi Cheema

Computer Systems Engineer 4

National Energy Research Scientific Computing Center (NERSC)

Storage Systems Group

Tiffany Connors

Senior Cyber Security Engineer (CSE4)

National Energy Research Scientific Computing Center (NERSC)

Cyber Security Group

Brandon Cook

Programming Environments & Models Group Lead

National Energy Research Scientific Computing Center (NERSC)

Programming Environments & Models Group

Jack Deslippe

Application Performance Group Lead

National Energy Research Scientific Computing Center (NERSC)

Application Performance Group

Rebecca Hartman-Baker

User Engagement Group Lead

National Energy Research Scientific Computing Center (NERSC)

User Engagement Group

Eric Roman

Computer Systems Engineer 4

National Energy Research Scientific Computing Center (NERSC)

Computational Systems Group

Cory Snavely

Lead, Infrastructure Services Group

National Energy Research Scientific Computing Center (NERSC)

Infrastructure Services Group

Tavia Stone Gibbins

Computer Systems Engineer 3

National Energy Research Scientific Computing Center (NERSC)

Networking Group

Becci Totzke

Project Manager

National Energy Research Scientific Computing Center (NERSC)

Business Operations & Services Group

Cary Whitney

Computer Systems Engineer 3

National Energy Research Scientific Computing Center (NERSC)

Operations Technology Group

Nicholas Wright

NERSC Chief Architect and Advanced Technologies Group Lead

National Energy Research Scientific Computing Center (NERSC)

Advanced Technologies Group