Materials Science Simulation Achieves Extreme Performance at NERSC

September 7, 2022

By Elizabeth Ball

Contact: cscomms@lbl.gov

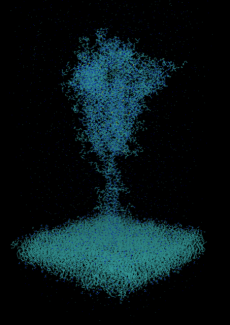

Graphic of the SARS-CoV-2 spike protein simulated in aqueous solution, with the hydrogen and oxygen atoms removed.

Using the new Perlmutter system at the National Energy Research Scientific Computing Center (NERSC) at Lawrence Berkeley National Laboratory (Berkeley Lab), a team of researchers led by Paderborn University scientists Thomas D. Kühne and Christian Plessl used a new mixed-precision method to conduct the first electronic structure simulation that executed more than a quintillion (10^18) operations per second (exaops). The team’s mixed-precision method is well-suited to running on Perlmutter’s thousands of GPU processors.

Of the quintillion-operations milestone, Plessl said: “The dimension of this number becomes clearer when you consider that the universe is about 10^18 seconds old. That means that if a human had performed a calculation every second since the time of the Big Bang, this calculation does the same work in a single second.”

Scientific simulations typically use “64-bit” arithmetic to achieve the high-precision results needed to represent physical systems and processes. With their new method, the Paderborn team showed that some real-world problems of interest can use lower-precision arithmetic for some operations, which lets the method take full advantage of the “tensor” cores on Perlmutter’s NVIDIA A100 GPU accelerators.
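To give a rough sense of the trade-off, the sketch below compares a 64-bit matrix product with one whose inputs are first rounded to 16 bits and then multiplied in 32-bit arithmetic. The specific precisions, the matrix size, and the use of NumPy on a CPU are assumptions made for illustration; the article does not state which precisions the team used, and this is not their method.

```python
import numpy as np

# Illustrative inputs; the size and the random values are arbitrary assumptions.
rng = np.random.default_rng(0)
a = rng.random((64, 64))
b = rng.random((64, 64))

# Reference product in 64-bit floating point.
reference = a @ b

# Round the inputs to 16-bit floats, then multiply and accumulate in 32-bit.
# This only mimics low-precision inputs with higher-precision accumulation;
# it does not run on tensor cores.
a_lo = a.astype(np.float16).astype(np.float32)
b_lo = b.astype(np.float16).astype(np.float32)
mixed = a_lo @ b_lo

# Relative error of the mixed-precision product against the 64-bit reference.
rel_err = np.linalg.norm(mixed - reference) / np.linalg.norm(reference)
print(f"relative error: {rel_err:.2e}")
```

Whether an error of this size is acceptable depends on the application, which is why the approach suits some operations and not others.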

The calculation used 4,400 GPUs on Perlmutter to perform a simulation of the SARS-CoV-2 spike protein. Kühne and Plessl used the submatrix method they introduced in 2020 for the approximate calculations. In this method, complex chemical calculations are broken down into independent pieces performed on small dense matrices. Because it uses many nodes working on smaller problems at once (what computing scientists call parallelism), the method is efficient and scales up or down to problems of different sizes.
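The idea of splitting one large sparse problem into independent small dense ones can be sketched as follows. This is a minimal illustration, not the CP2K implementation: the function name `submatrix_apply`, the rule of building each submatrix from the nonzero pattern of one column, and the choice of matrix inversion as the function being evaluated are all assumptions made for the example.

```python
import numpy as np

def submatrix_apply(A, f):
    """Approximate f(A) one column at a time using small dense submatrices.

    Hypothetical helper for illustration; assumes A is square with a
    nonzero diagonal.
    """
    n = A.shape[0]
    result = np.zeros_like(A, dtype=float)
    for i in range(n):
        # Rows with a nonzero entry in column i select the submatrix.
        idx = np.flatnonzero(A[:, i])
        assert i in idx, "diagonal entry must be nonzero"
        # Dense principal submatrix restricted to those rows and columns.
        sub = A[np.ix_(idx, idx)]
        # Evaluate the function on the small dense matrix only.
        f_sub = f(sub)
        # Copy back the submatrix column that corresponds to column i.
        local = int(np.searchsorted(idx, i))
        result[idx, i] = f_sub[:, local]
    return result

# Example: a block-diagonal matrix, for which the column-wise result
# coincides with the full inverse.
A = np.array([
    [4.0, 1.0, 0.0, 0.0],
    [1.0, 3.0, 0.0, 0.0],
    [0.0, 0.0, 2.0, 1.0],
    [0.0, 0.0, 1.0, 5.0],
])
approx = submatrix_apply(A, np.linalg.inv)
```

Each loop iteration touches only its own submatrix, so the iterations could in principle be distributed across many processors. For matrices that are not block-diagonal, the result is generally an approximation.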

“What’s neat about it is that it’s a method that’s inherently extremely parallel, so it’s extremely scalable,” said Plessl. “And that’s the reason we’re able to target the largest supercomputers in the world using this method. The other benefit of the method is that it’s very suitable for GPUs because it kind of converts a problem that is a sparse-matrix problem that is hard to solve on a CPU to a very parallel implementation where you can work on much smaller dense matrices. From a computer science perspective, I think it’s quite exciting.”

“However, people in the high-performance community have been a little bit critical about approximate approaches like our submatrix method,” said Kühne of the speed of their calculation. “It appeared nearly too good to be true, that is to say, we reached a very high degree of efficiency, allowing us to conduct complex atomistic simulations that were so far considered to be not feasible. Yet, having access to Perlmutter gave us the opportunity to demonstrate that it really works in a real application, and we can really exploit all the positive aspects of the technique as advertised, and it actually works.”

Kühne and Plessl approached NERSC after the June 2021 Top500 list of supercomputers ranked Perlmutter number five in the world. There, they worked with Application Performance Specialist Paul Lin, who helped set them up for success by orienting them to the system and helping to ensure that their code would run smoothly on Perlmutter.

One major challenge, Lin said, was running complex code on a system as new as Perlmutter was at the time.

“On a brand-new system, it’s both challenging but also especially exciting to see science teams achieve groundbreaking scientific discoveries,” said Lin. “These types of simulations also help the center tune the system during deployment.”

Kühne and Plessl ran their calculations using the code CP2K, an open-source molecular dynamics code used by many NERSC users and others in the field. When they’re finished, they plan to write up and release their process for using the code on NERSC so that other users can learn from their experience. And when that’s done, they’ll keep working on the code itself.

“We’re just in the process of defining road maps for the further development of the CP2K simulation code,” said Plessl. “We’re getting more and more invested in developing the code, and making it more GPU-capable, and also more scalable and for more use cases — so NERSC users will profit from this work as well.”

As for the record, it’s an exciting development and a glimpse of what Perlmutter will be able to do for all kinds of science research going forward.

“We knew the system was capable of one exaop at this precision level, but it was exciting to see a real science application do it, particularly one that’s a traditional science application,” said NERSC Application Performance Group Lead Jack Deslippe, who also helped oversee the project. “We have a lot of applications now that are doing machine learning and deep learning, and they are the ones that tend to have up to this point been able to use the special hardware that gets you to this level. But to see a traditional materials-science modeling and simulation application achieve this performance was really exciting.”

This story contains information originally published in a Paderborn University news release.

About NERSC and Berkeley Lab

The National Energy Research Scientific Computing Center (NERSC) is a U.S. Department of Energy Office of Science User Facility that serves as the primary high performance computing center for scientific research sponsored by the Office of Science. Located at Lawrence Berkeley National Laboratory, NERSC serves almost 10,000 scientists at national laboratories and universities researching a wide range of problems in climate, fusion energy, materials science, physics, chemistry, computational biology, and other disciplines. Berkeley Lab is a DOE national laboratory located in Berkeley, California. It conducts unclassified scientific research and is managed by the University of California for the U.S. Department of Energy.